You’ve managed to automate it all away.

Now what?

By automating it all away, I mean you’ve picked off the first layer of SEO and AEO tactics. You’ve published all of your comparison pages, feature pages, listicles, and even a few how-tos. You’ve automated brand mention outreach through an agentic outreach system that offers payola or a brand mention swap within your listicles (which look very similar to their listicles). In fact, everything looks like listicles now. You’ve automated the content refresh and internal linking process through something like AirOps.

Now, we can just sit around talking about free will and drinking beer.

Except we don’t drink beer anymore, and second-order (or nth-order) thinking makes it pretty clear that when everything is equalized tactically, there’s no advantage, let alone a defensible moat. You’re in a Red Queen race and, despite sprinting very fast, you find yourself right back at the starting line.

We’ve riffed on this idea in a few past newsletter essays. Our Director of Organic Growth Strategy, Ben, summed it up well: “When everyone gains access to the same efficiency, the efficiency itself stops being useful as a differentiator. You’re running faster just to stay in place.”

This essay is about what’s next. Once you’ve picked off the low-hanging fruit, where do you look?

Wave One: The Playbook That Already Leaked

Let’s be honest about what wave one AEO looks like.

Structured content formatted for AI extraction. Self-promotional listicles, like “Top 10 Project Management Tools” with your product conveniently ranked first. Automated outreach campaigns to get brand mentions on other listicles. “Entity optimization.” “Schema markup.” Question and answer-first formatting (and “chunking”) so AI systems can pull clean snippets.

In fact, a lot of it does seem to resemble very standard SEO, albeit with some new words and metrics.

Some of these tactics work. They also have a very low barrier to entry. Any company with a budget and a content brief template can run this playbook by next Tuesday.

That’s the Red Queen problem. When every competitor runs the same play, the advantage zeros out. You’re not ahead, you’re simply at parity. Hundreds or thousands of brands now optimize for the same citation slots in the same AI-generated answers.

While I wouldn’t avoid the foundational, first-wave tactics (unless they are risky, spammy, or low expected value), I wouldn’t bet on them to win any distinct market advantage (at least not for very long).

In fact, the information ecosystem is so noisy around AEO, I would take many public case studies with a grain of salt.

We’ve looked into many of the publicly available case studies and found, on deeper analysis, that the tactics that “worked” did so only briefly and with a weak effect size, and the gains often reversed within a matter of weeks. We’ve also run dozens of experiments testing commonly espoused tactics (such as phrasing headers as questions) and found no significant effect on AI search or traditional SEO.

We do not work in isolation.

Marketers are operating in multiple arenas with several independent players, each with their own incentives: your customers and their changing behavior, your competitors and their adoption of new tools or tactics, and the platforms themselves that filter and distribute information. A little game-theoretic thinking helps here.

And while there’s often a small window to take advantage of efficiency plays or grey hat tactics, these loopholes usually close in favor of harder-to-fake signals, mainly because of the interplay between user and platform incentives (i.e. platforms do not want to deliver a bad experience to their users).

Already we see that a significant portion of influence in AI answers comes from off-page sources, even for branded queries about a specific product.

Publishing n² content pages doesn’t solve for relevancy, authority, or the trust signals that are robust against model or algorithm changes.

Which means the meaningful game is probably not merely about formatting your own content for AI extraction, though you may gain marginal advantage from doing so if you have a significant content library of unoptimized pages.

The bigger prize comes from earning mentions from sources AI systems already trust and from building what we call “brand gravity” that is robust against tactical waves.

The Platform Incentive Layer

To understand why wave one decays, you need to think critically about what AI platforms (or rather, any platform – search, social, AI, whatever) actually want.

They don’t want to simply reward brands, though “brand” itself is a hard-to-fake indicator of quality (it’s why people buy Tylenol over generic products).

Generally, they’re seeking to provide utility to the user, which means parsing intent, quality, credibility, and relevance to the query and the user. Their business over the long run depends on user trust, and if citations lead to bad answers, users leave.

The signals are already visible, and though there are currently several loopholes in optimizing for LLMs, we need to look at where the puck is likely to go. In doing so, we can structure a robust or even antifragile presence against algorithm updates, model updates, and reallocations of value in organic growth programs (e.g. CTR apocalypse).

These platforms will build credibility hierarchies that favor hard-to-fake signals by design. But even setting platform filtering aside, these are the tactics that will earn competitive advantage and help you stand out once the other tactics have been equalized. A good heuristic is usually “if you take away AI search, is it still worth doing?” For the plays I’ll outline below, the answer is a resounding yes.

This isn’t speculation about what platforms might do. It’s what they’re already doing. And like Google’s evolution from PageRank to Helpful Content Updates, the direction only tightens over time.

What’s Hard to Fake?

The biologist Amotz Zahavi proposed the handicap principle in 1975: the peacock’s tail persists because it’s metabolically expensive. Only a genuinely strong bird can afford the waste. The cost is the proof.

In AEO, we can apply similar logic. When every brand can format content for AI extraction, formatting alone stops being a signal (it becomes the price of admission). The signals that remain are the ones that require genuine investment or differentiation. The harder something is to fake, the more durable the competitive advantage.

Four areas stand out to me right now.

Review ecosystems and validated social proof.

G2 announced they will acquire Capterra, Software Advice, and GetApp, consolidating 6 million verified reviews and 200 million annual software buyers onto one platform.

This is a strong play to position G2 as the trust layer in AI search. Review platforms are becoming the authoritative layer AI systems cite for product comparisons. Getting listed requires product acceptance from the platform. Volume requires real customers. Quality requires actual satisfaction. You can’t prompt your way into 500 five-star G2 reviews. The cost of entry is having a product people genuinely like and a customer base willing to say so publicly.

Creator and influencer networks.

PartnerStack now integrates with AI visibility tools like Evertune to identify creators whose content appears in AI citation sources and broker partnerships between those creators and brands.

The logic is straightforward: if AI systems already cite a creator’s content, and that creator authentically recommends your product, you inherit their citation authority. This is an evolution in affiliate marketing, and it’s one that is currently (likely) underpriced. Many affiliates saw their traffic, and thus their revenue, decline over the past few years, yet many of these sites remain strong and authoritative in influencing AI search. The market hasn’t yet calibrated the value of these placements the way it has for affiliate links.

Outside of that, creator partnerships are relatively untapped for B2B brands. I’m not the first to notice this. But as we see a rise in UGC and social media citations (in B2B, we constantly see LinkedIn and YouTube show up, among other niche players), individual creators are rising in importance. This was true outside of AI search, but now it’s also an AEO play. Building and deploying influencers, creators, and evangelists is a hard coordination effort with payback across search, social, AI search, and more.

Community management at scale.

Not your classic “astroturfing on Reddit” play, but a dedicated program with real humans participating authentically in communities where your buyers congregate.

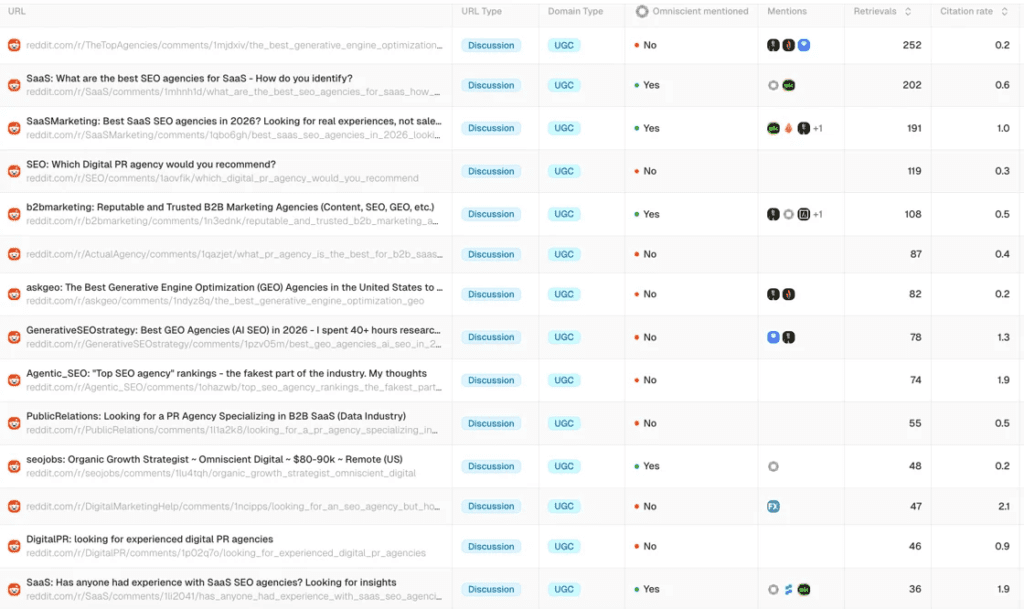

You’ve seen the citation data from Profound, AirOps, etc. – Reddit dominates.

There are, of course, analytical nuances to this that I’ve written about before, but it’s pretty apparent Reddit threads show up in AI search. Are they eminently influenceable? That’s arguable, especially at scale.

But your presence on Reddit is a mirror, an intelligence source, and a surface area for engagement. Importantly, it is also a surface area that influences your sentiment within AI search.

Of course, Reddit communities are famously allergic to marketing. The signal is authentic participation over months and years: answering questions, sharing expertise, building reputation within the community’s own norms.

I’ll admit that there’s currently a large amount of Reddit spam. So it’s clearly gameable, and not especially hard to game right now.

However, hark back to platform incentives and realize that Reddit, AI engines, and search engines all have reasons to tamp down Reddit spam at its current scale.

It’s also a many-headed hydra for those who want to game it. For example, we had a massive global brand reach out to us that wanted to improve their sentiment (which was extremely low) in AI search. Turns out, there were thousands of Reddit threads complaining about their products. You can try your best, but at that point, it’s a product and customer experience problem.

Thinking outside of Reddit and AI search, peer recommendations are still a major lever when it comes to B2B (and any highly considered) purchase. So it never hurts to map out your communities and influential pools of peers and spend a little time in those communities (possibly even creating your own!).

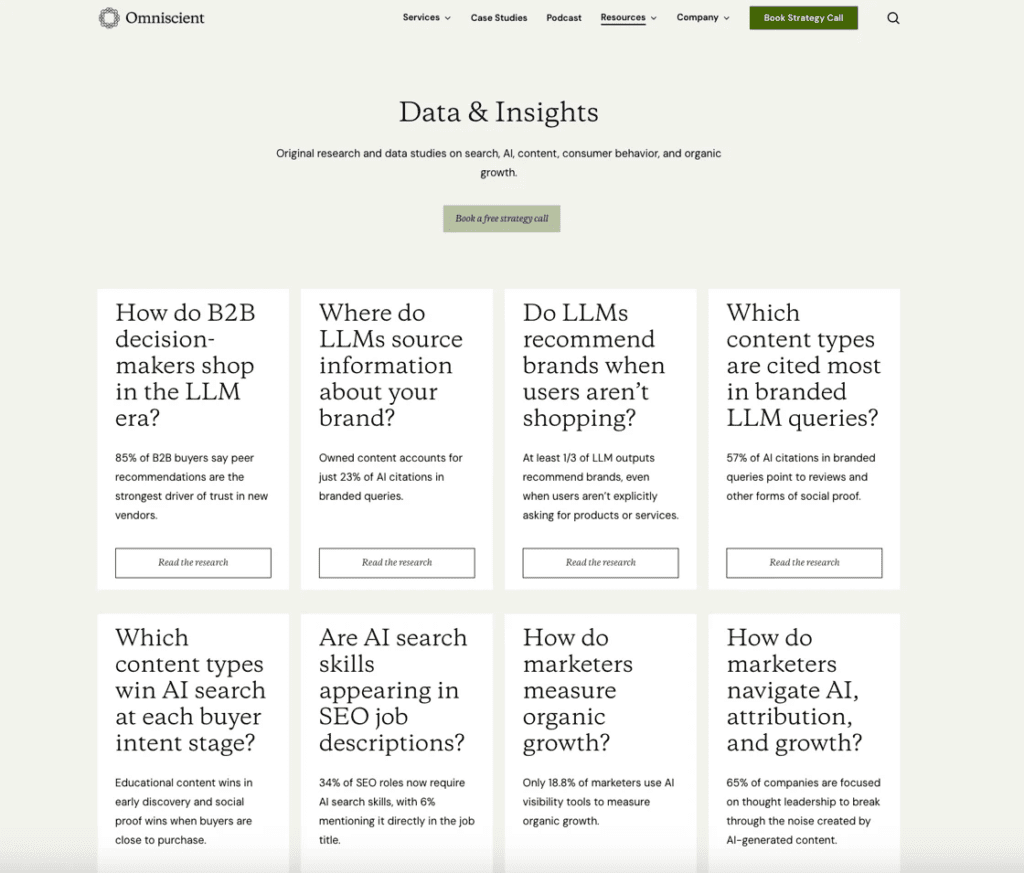

Original research and proprietary data.

You can’t (ethically) prompt a dataset into existence. Original research with real methodology, real sample sizes, and novel findings appears to have greater success in traditional search as well as AI engines.

The investment pays off even at the first layer, but the secondary effects are even greater. Original research is linked to and mentioned, increasing your off-page authority. You can repurpose original research into a million different assets, improving your regular content efforts, publishing genuinely interesting content on social media, dripping insights into sales decks and webinars, etc.

The emergent benefit, over time, is that you become the brand people look to as THE source for insights in your industry.

Some call that “thought leadership.”

Where the Puck Is Heading

Three bets about how this unfolds.

- AI platforms will get better at detecting manufactured signals and optimized-for-AI content. This is the same arms race Google ran for twenty years, e.g. link schemes became detectable, content farms became penalizable, and each round of detection pushed the advantage further toward quality and user experience signals. AI search will follow the same arc, faster.

- The brands that invest early in authority infrastructure (review presence, creator networks, community participation, original research) will have compounding advantages that late movers can’t replicate quickly. These moats don’t have a “catch-up” button. A thousand authentic G2 reviews built over three years can’t be matched in three months.

- AEO will stratify, even accounting for hyper long tail queries and personalization. A small number of brands with deep credibility moats will capture disproportionate citation share, while the long tail of wave-one optimizers competes for scraps. A Matthew effect, just like traditional search: those who have authority get cited more, which builds more authority, which gets them cited more.

The implication for right now: the window to build these moats is open but closing. Every month more competitors recognize that wave one (marked by mass scale AI content, listicles, listicle swaps) is commoditizing and begin investing in wave two.

The Race That Rewards Depth

The Red Queen’s paradox assumes all runners evolve at the same rate. In practice, some runners build advantages that compound while others just run faster on the same treadmill.

Wave one AEO is the treadmill. Necessary to stay in the race, but not sufficient to win it. Wave two is building a different kind of legs, the kind that comes from investing in trust signals that are more difficult to displace. Things that signal trust to both human shoppers and machine models.

Of course, everything needs to be calibrated with your own market data and context. I’m referencing review sites here, but if you don’t have an adequate spread of product and feature pages from which search and AI engines can pull information, well, prioritize those.

And in some deeper sense, if you spend twice the amount of time focusing on your customers (their journey, pain points, voice of customer phrases) and half the amount of time scanning for new AEO tactics, you’ll probably still end up in a better place than if you had chunked and programmatically scaled your way to a few more citations.

I do hope that in the face of tactical homogeneity, discerning brands pause, reflect, and consider playing the long game and winning the big prize.
