The first sign that something was wrong came from a single client. AI-driven referral traffic had been climbing through the back half of 2025, then in late January it bent. A slow, steady downward slope.

We made the obvious moves. Checked rankings. Checked publishing cadence. Pulled visibility data from our AI tracking stack to make sure the brand was still being cited in the categories that mattered. Everything looked fine.

Then the second client showed the same pattern. Then the third. Then a fourth. By the second week of March, we saw the same behavior across six different B2B clients in different niches (procurement, security, hosting, telecom, and board software). The dotted trend lines were nearly identical. Every single one was sloping down from January to March.

That’s the moment a client problem becomes a platform problem. And once you start looking at it as a platform problem, the explanation gets a lot more interesting (and less alarming) than it first appeared.

What we expected vs. what we found

Our first hypothesis was that AI visibility had slipped. Maybe a few key prompts had stopped surfacing the brands, or a competitor had shipped better content.

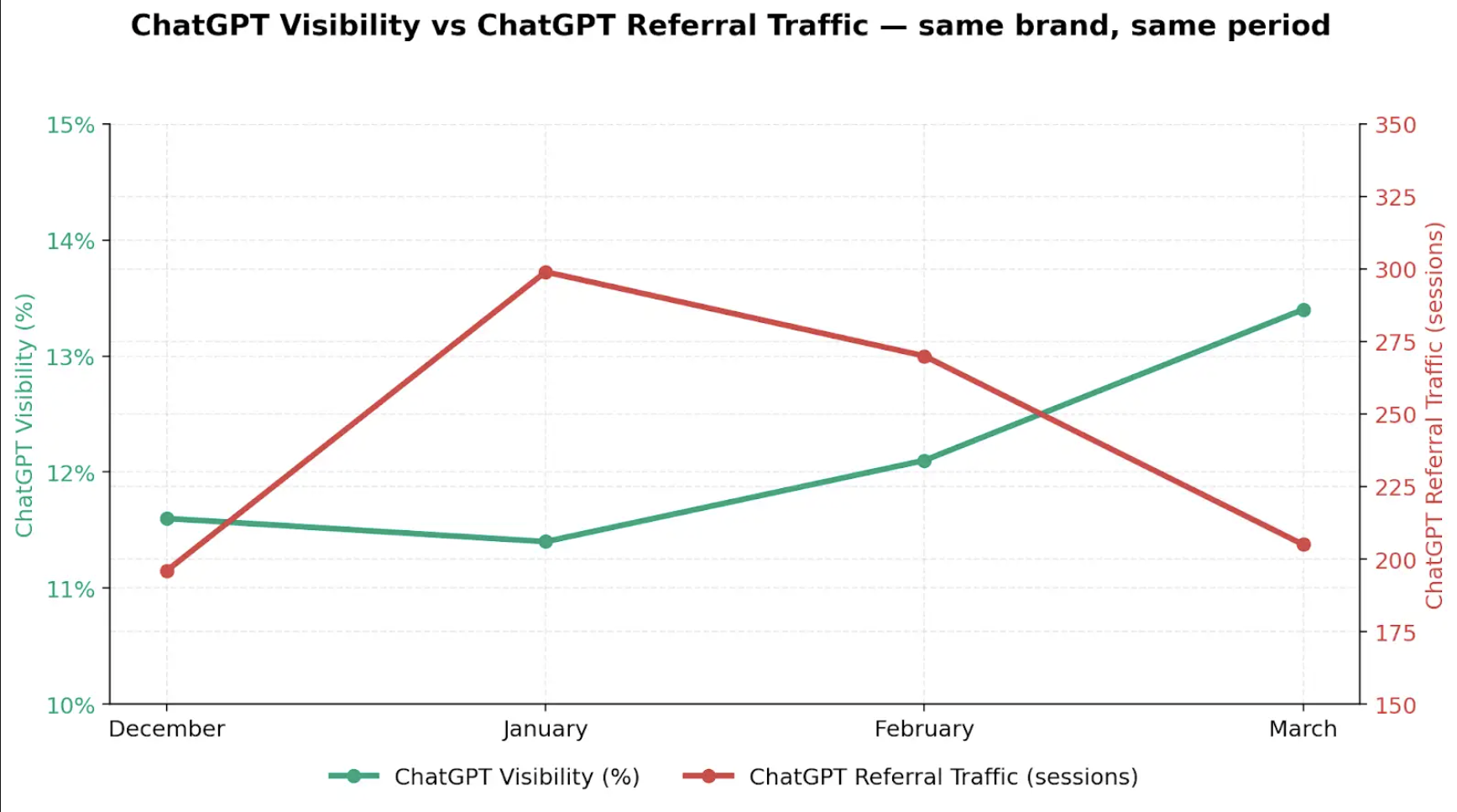

The data said no. Across the portfolio, visibility scores were either flat or up:

- One enterprise SaaS client’s average visibility went from 11.5% to 12.1% MoM, meaning AI cited them slightly more often, even though AI-driven referral visits dropped 64% in the same window.

- A client in the procurement niche saw its AI visibility rise from 13.1% to 21.4% between January and March 2026. Its LLM-attributed conversions dropped roughly 90% in the same period.

- A hosting platform client showed dominant visibility on key prompts, while AI referral sessions declined.

If anything, our clients were being seen more by AI. The clicks just weren’t coming back to the site. Whatever was happening, it was happening between “the AI uses your content” and “the user lands on your page.”

We then broke the AI traffic down by platform, and that’s when the picture became clear. The decline was coming from one platform.

ChatGPT referrals were decreasing, while Gemini, AI Mode, Perplexity, and Copilot were flat or growing across the same clients in the same window.

ChatGPT also accounts for most AI referral traffic across our portfolio, so even a modest drop there shows up as a major drop in aggregate.
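To make the share-weighting concrete, here is a minimal Python sketch of a per-platform breakdown. The session counts are hypothetical and chosen only for illustration (they are not client data), and the function name `platform_breakdown` is our own. It assumes both periods cover the same set of platforms.

```python
def platform_breakdown(before, after):
    """Per-platform change and contribution to the aggregate change.

    `before` and `after` map platform name -> referral sessions. They are
    assumed to share the same keys, with every `before` count above zero.
    """
    total_before = sum(before.values())
    rows = {}
    for platform, b in before.items():
        a = after[platform]
        rows[platform] = {
            "share_before": b / total_before,        # share of all AI referrals
            "pct_change": (a - b) / b,               # change within the platform
            "contribution": (a - b) / total_before,  # effect on the aggregate
        }
    total_change = (sum(after.values()) - total_before) / total_before
    return rows, total_change


# Hypothetical session counts, for illustration only (not client data).
before = {"ChatGPT": 800, "Gemini": 80, "Perplexity": 70, "Copilot": 50}
after = {"ChatGPT": 560, "Gemini": 88, "Perplexity": 77, "Copilot": 50}

rows, total_change = platform_breakdown(before, after)
for platform, row in rows.items():
    print(f"{platform:<11} share {row['share_before']:.0%}  "
          f"change {row['pct_change']:+.0%}  "
          f"contribution {row['contribution']:+.1%}")
print(f"Aggregate change: {total_change:+.1%}")
```

In this made-up example, a 30% fall on a platform that holds 80% of AI referrals pulls the aggregate down by 24 points, while 10% growth on two smaller platforms adds back only 1.5 points, so the total still drops 22.5%.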

The cliff was ChatGPT-shaped. That’s where we started looking next.

What changed inside ChatGPT

The timing pointed straight at OpenAI. The model change and the ad pilot landed in the same window, but only the model change is doing real work in the B2B data we’re seeing. The ads are a structural shift worth watching, not yet a driver of the decline.

1. GPT-5.3 Instant compressed citations

First, GPT-5.3 Instant became the default model for all ChatGPT users. OpenAI was unusually direct about what it had changed. According to its own release notes, the new model is “less likely to overindex on web results, which previously could lead to long lists of links or loosely connected information.”

In plain English: where GPT-5.2 might have surfaced ten or twelve sources at the bottom of an answer, GPT-5.3 Instant often surfaces two. The model was redesigned to synthesize the answer in the chat, not to act as a pass-through to a list of links (a key area where traditional and AI search differ, and a reason to invest in optimizing for both).

If your brand was the fourth or fifth citation before, that traffic is gone. Similarweb tracked a 15% drop in U.S. visitor referrals from GenAI platforms between October 2025 and January 2026. Our client data is the downstream footprint of that.

GPT-5.5 has since replaced 5.3 Instant as the default. The compression didn’t go with it. Fewer citations per answer is the new normal, not a quirk of one model.

BOFU is taking the hardest hit

Inside the decline, the steepest losses are on bottom-of-funnel (BOFU) pages such as pricing, comparison, and product pages. That’s exactly where GPT-5.3’s “answer-in-chat” design hurts the most. When someone asks ChatGPT a BOFU question (“how does product X compare to Y on pricing?” or “what is the best product for Z?”), the model now tries to fully resolve the answer inside the response.

When the model uses our client’s content to resolve the question in chat, the user gets the answer they came for and never clicks through to the source. The brand gets cited, but it doesn’t get the visit.

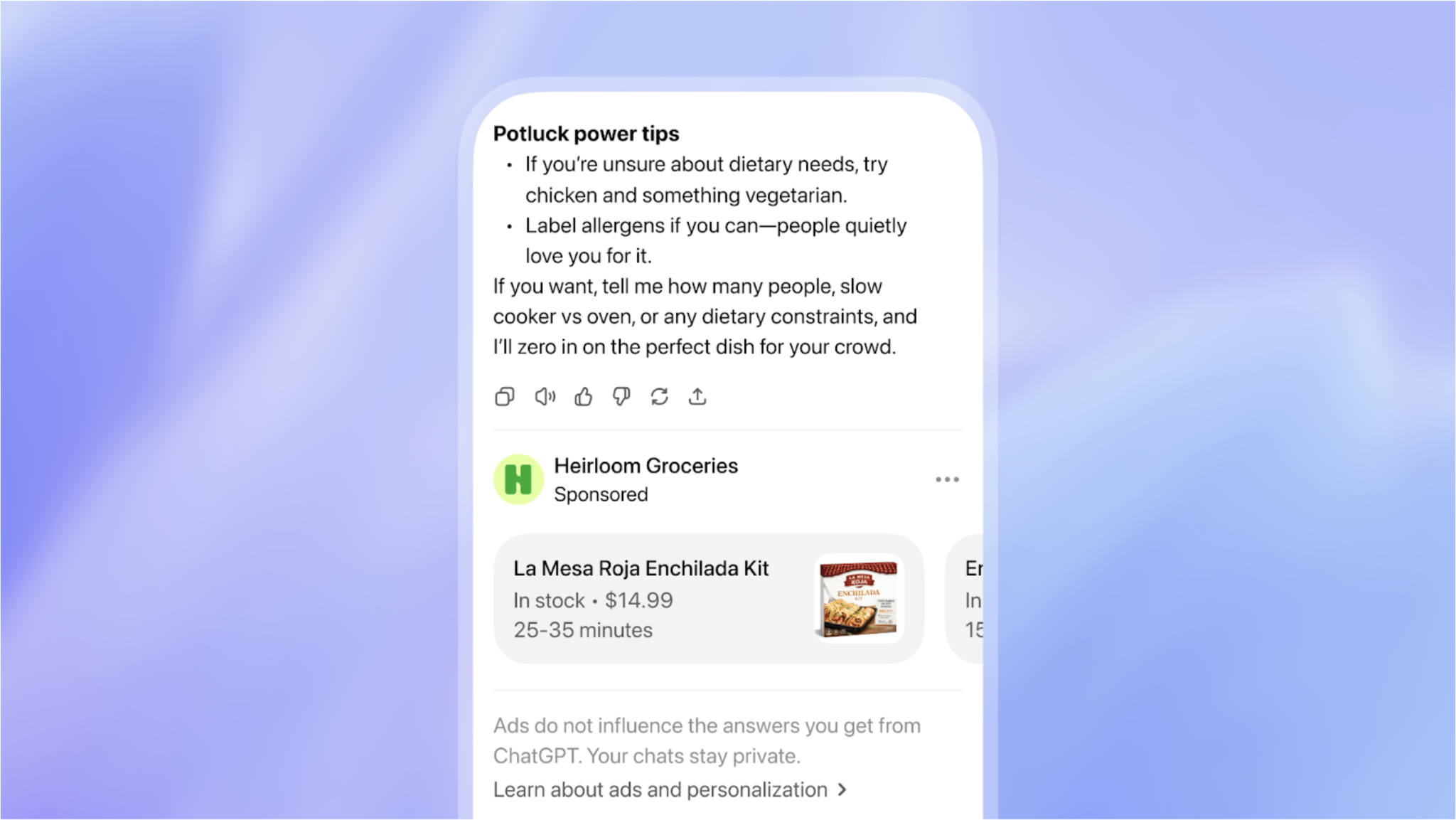

2. The ChatGPT ad pilot

OpenAI also launched an ad pilot inside ChatGPT in the same window, served to U.S. users on the Free and Go tiers.

For now, the impact on B2B is limited, since the pilot is closed, the format is consumer-oriented, and there’s no self-serve buying surface for marketers yet. This means most of the categories our clients compete in won’t see ads sitting alongside their citations.

But it’s a structural change worth watching: once a self-serve ad platform exists, organic citations and paid placements will compete for the same screen real estate, and ChatGPT’s referral economics shift again.

Other LLMs are gaining ground

Across our portfolio, Claude, Gemini, Perplexity, and Copilot are all trending up, meaning some of the lost ChatGPT traffic is migrating to other LLMs rather than disappearing entirely.

The important caveat: total LLM-driven sessions are still declining overall. This is a partial redistribution, and it reinforces why visibility across all major AI platforms matters, not just visibility in ChatGPT. A brand with strong visibility only on ChatGPT is increasingly exposed to citation compression, UI changes, and model updates that can reduce AI-driven traffic overnight.

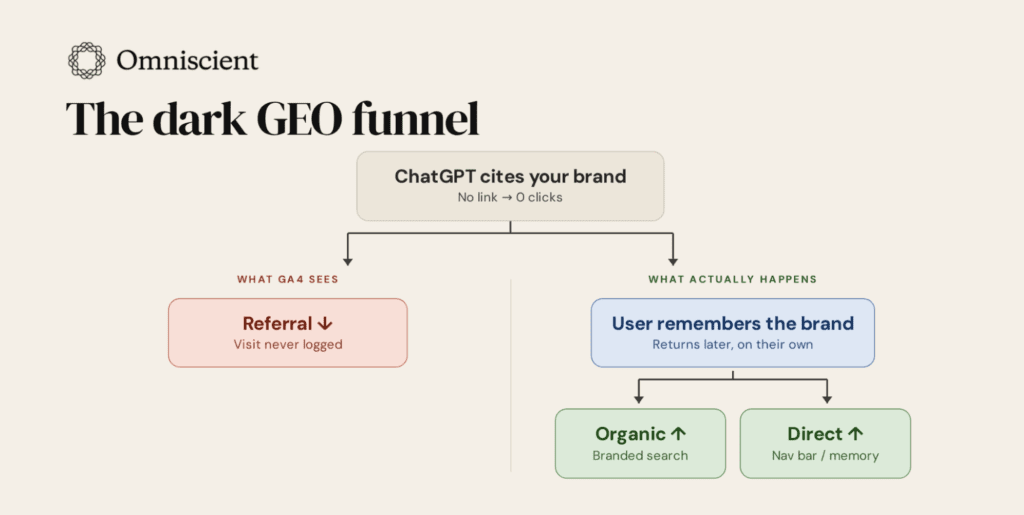

Direct/None and Organic spikes are the same traffic, untracked

While ChatGPT referrals fell across these accounts, “Direct/None” sessions in GA4 climbed across several of the same accounts. That spike isn’t unrelated to the decline. It’s mostly the same traffic, with the referrer stripped.

A couple of mechanisms are doing the work:

- When a user clicks a link inside the ChatGPT or Perplexity mobile app, the embedded browser frequently strips the referrer header. GA4 logs the visit as Direct. A Wheelhouse DMG analysis estimated GA4 may be capturing as little as 9% of true AI-referred mobile traffic.

- Then there’s the “Google the brand” behavior: a user asks ChatGPT for a recommendation, gets one, opens a new tab, and searches for the brand name in the browser or a search engine. GA4 sees branded organic or direct. The AI started the journey and gets none of the credit, even though it may have done the lion’s share of the work.

When you stack these mechanisms, the synchronized GA4 chart starts to look less like a traffic loss and more like a tracking handoff that broke in plain sight.
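The two mechanisms above can be illustrated with a deliberately simplified Python sketch of referrer-based bucketing. This is not GA4’s actual channel logic. The hostname lists are assumptions to verify against your own referral reports, and `classify_session` is a hypothetical helper.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed hostnames; check them against your own referral data.
AI_HOSTS = {"chatgpt.com", "chat.openai.com", "www.perplexity.ai",
            "gemini.google.com", "copilot.microsoft.com", "claude.ai"}
SEARCH_HOSTS = {"www.google.com", "www.bing.com"}


def classify_session(referrer):
    """Bucket a session by its referrer URL (simplified, not GA4's rules)."""
    if not referrer:
        # No referrer header at all: the visit can only be counted as direct.
        return "direct"
    host = urlparse(referrer).hostname or ""
    if host in AI_HOSTS:
        return "ai_referral"
    if host in SEARCH_HOSTS:
        return "organic_search"
    return "other_referral"


# Four hypothetical visits that all began with an AI recommendation.
sessions = [
    "https://chatgpt.com/",     # desktop click, referrer intact
    "",                         # in-app browser click, referrer stripped
    None,                       # same situation, header missing entirely
    "https://www.google.com/",  # user searched for the brand afterwards
]

counts = Counter(classify_session(r) for r in sessions)
print(counts)
```

All four hypothetical visits began with an AI answer, but only one is bucketed as an AI referral. The other three land in direct and organic search.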

This is the attribution gap that’s been frustrating marketers for the last year. You can’t fix it inside GA4, because the influence is now happening one step earlier, when the AI generates the answer.

What does this change about how we measure AI?

If you’re still looking at GA4 referral sessions in isolation to understand whether GEO is working for a brand, you’re going to misread the picture. Referral traffic is still a useful signal. What’s changed is that it can no longer carry the weight on its own.

Here’s how we’re shifting AI performance measurement at Omniscient (and how we’d encourage you to think about it):

Referral traffic counts as one input, not the headline

A drop in referrals is an important signal, but it has to be read alongside visibility, share of voice, citations, and mentions, which are now the leading indicators. The brands moving in the right direction are the ones whose visibility is strong even when the referral chart looks ugly.

AI is now influencing channels we don’t think of as “AI channels”

Branded organic search and direct traffic lift when ChatGPT recommends a brand and the user goes to Google it. Direct traffic lifts when AI mobile apps strip referrers. Demo-form sources shift when prospects first meet the brand in an AI answer and only convert weeks later through email or paid. The honest read on AI performance is a holistic one, spanning every channel where AI’s influence shows up, even when the source/medium doesn’t say so.

Self-reported attribution is crucial

It isn’t the only reliable signal we have, but right now it’s the cleanest way to recover the “AI-influenced, GA4-invisible” path. A “How did you hear about us?” question on demo and lead forms is currently the easiest tactical fix any team can ship to start seeing the AI journeys their analytics are missing. Get with your Sales or MOps team, and add this question today.
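Once responses start coming in, the free-text answers need some bucketing. Below is a rough Python sketch that flags answers mentioning an AI tool. The keyword list, the `mentions_ai` helper, and the sample responses are all assumptions for illustration. A real taxonomy would need manual review, since a word like “Gemini” can be ambiguous.

```python
import re

# Assumed keyword list; extend it for the tools your buyers actually name.
AI_PATTERN = re.compile(
    r"\b(chatgpt|chat gpt|gpt|openai|perplexity|gemini|copilot|claude|ai)\b",
    re.IGNORECASE,
)


def mentions_ai(answer):
    """Return True if a free-text answer names an AI tool or says 'AI'."""
    return bool(AI_PATTERN.search(answer))


# Hypothetical form responses, for illustration only.
responses = [
    "ChatGPT recommended you",
    "Google search",
    "A colleague emailed me",
    "asked Perplexity for vendors",
    "LinkedIn ad",
]

flags = [mentions_ai(r) for r in responses]
ai_share = sum(flags) / len(responses)
print(f"AI-influenced responses: {sum(flags)} of {len(responses)} ({ai_share:.0%})")
```

The word boundaries keep “ai” from matching inside words such as “emailed”, so the third response is not flagged.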

GEO strategy gets sharper, not looser

When citation slots compress from ten to two, the model gravitates toward genuine authority signals: third-party platform presence (G2, Reddit, industry publications), proprietary data, named experts, and original research. Our research shows that 57% of branded query citations go to product and company reviews, listicles, forums, social media, and case studies, and we expect to see this continue as these models get updated. The brands still showing up in those two slots are the ones that built those signals before the answer compression happened.

The synchronized cliff we’ve seen across our book of clients is a sign that the easy version of “AI traffic” is over. The brands that win from here are the ones that read measurement across channels, weigh the right signals together, and invest in the authority that survives citation compression.

If your dashboard says ChatGPT traffic is declining, the problem isn’t your content. The problem is that the dashboard is reading, in isolation, one number that no longer tells the full story.
