I recently did a webinar with Jason Widup, Rahul Jain, and Josh Budman from Noble.

We covered a lot of ground on AI search: what’s real, what’s hype, and what to actually do about it.

Rather than making you watch the full recording, here’s the distilled version: the questions we tackled and what I think the honest answers are.

What’s the biggest misconception about AI search?

There are many misconceptions, but the two we covered both stem from a misunderstanding of what AI search actually is.

There’s a spectrum of misconceptions.

One extreme says AI search is a nothing burger: just do the same SEO work (oh, and tack on “also do brand marketing” as a hand-wavy afterthought). The other extreme says everything is new: de-invest from SEO entirely, go all-in on AEO, adopt whatever tactic dropped on LinkedIn this week.

The truth sits in the middle, as it usually does. The ingredients look similar. You’re still publishing and optimizing content. You’re still building off-page trust and verification. But the math is different.

Off-page signals like brand mentions, third-party citations, and earned media have moved from “nice to have” to primary driver, and the mechanisms behind them are different. Backlinks and brand mentions may look cosmetically similar, but they have different utility functions. Brand mentions, especially when they co-occur with categorical phrases (e.g. “Nike” next to “running shoes”), build associations with your brand that go beyond the value of high-DR links.

That shift matters because off-page work wasn’t traditionally under SEO’s purview. Most SEO teams don’t own brand mentions, public relations, or customer marketing (e.g. review sites like G2); these are broader marketing activities. And most marketing orgs haven’t built the muscle for it, at least not as an integrated, holistic motion.

The related misconception: your website is the holy grail. A lot of AI visibility platforms focused on on-site optimization: restructure your headings, add FAQ schema, format for AI extraction. That created the impression that on-site changes are what move the needle. They’re part of the story. Not the whole story. And probably not even the biggest part for most brands.

Does “only 2% of traffic comes from LLMs” mean AI search doesn’t matter?

This is an attribution problem, not an importance problem.

Data is all over the place, but I’ve seen studies showing Google AI Overviews appearing for anywhere from 13% to 60% of queries. Many users who see a brand in an AI Overview don’t click through. Instead, they’ll often open a new tab and search for the brand directly, splintering your channel and source attribution. That visit shows up as organic or direct traffic, not LLM traffic.

The delta at our own company is massive.

Click-based telemetry says 5–10% of traffic is LLM-attributed. Self-reported attribution says 50–70%. That’s not a rounding error. It’s a measurement failure that’s causing people to deprioritize a channel that’s influencing the majority of their pipeline.

It’s also a numerator/denominator trap.

Fewer people click through from LLM answers, so the conversion rate on those clicks looks inflated. But many more people see your brand than ever click. The exposure is enormous. So the talk of “LLMs converting 10X higher” than other channels is also a measurement mishap, not proof of higher intent per se.

The attribution just hasn’t caught up to the behavior.
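To make the numerator/denominator trap concrete, here’s a minimal Python sketch with made-up numbers (they are illustrative assumptions, not our data). It compares conversions per click and per impression for a classic organic channel and an LLM channel that get the same exposure but very different click-through.

```python
# Hypothetical numbers for illustration only; these are not measured data.
impressions = {"organic": 10_000, "llm": 10_000}  # people who saw the brand
clicks = {"organic": 1_000, "llm": 50}           # people who clicked through
conversions = {"organic": 10, "llm": 5}          # click-attributed conversions


def rate(numerator: int, denominator: int) -> float:
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


for channel in ("organic", "llm"):
    per_click = rate(conversions[channel], clicks[channel])
    per_impression = rate(conversions[channel], impressions[channel])
    print(f"{channel}: {per_click:.1%} per click, {per_impression:.2%} per impression")

# Same conversions, two different denominators, two opposite stories.
click_lift = rate(conversions["llm"], clicks["llm"]) / rate(conversions["organic"], clicks["organic"])
impression_lift = rate(conversions["llm"], impressions["llm"]) / rate(conversions["organic"], impressions["organic"])
print(f"LLM vs organic: {click_lift:.1f}x per click, {impression_lift:.1f}x per impression")
```

With these hypothetical inputs, LLM traffic looks 10X better per click but converts at half the rate per impression. Neither figure counts people who saw the brand in an answer and converted later through a branded search, which is the attribution gap described above.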

Related deep dive: How Much Traffic Does ChatGPT Send?

Do brands show up at the top of the funnel in AI engines?

Generally, no.

When someone asks “What is an AI agent?” the LLM answers the question. It doesn’t (usually) recommend vendors. Even before AI search, we deprioritized very high-level top-of-funnel content. It was low intent and rarely tied back to meaningful conversions (and it usually led brands into what we call the “traffic trap”).

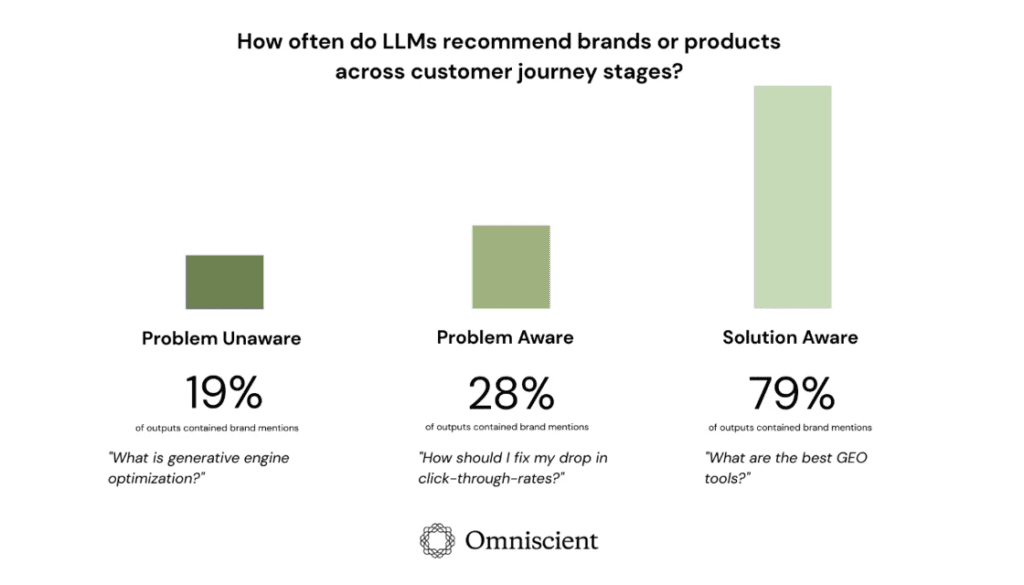

It’s not never, though. Our own research found that up to 1/3 of LLM outputs recommend brands, even when users aren’t explicitly asking for products or services.

But there’s another, underexplored exception at the top of the funnel, where users are still wrapping their heads around a problem.

If you create original research and distribute it well (get it cited across publishers, get it referenced in industry conversations), you can show up at the top of the funnel as a source rather than a vendor. A CMO searching “What are AI overviews doing to traffic?” might encounter your research, creating zero-friction awareness without you ever writing another “ultimate guide.”

My take: original research is, in many ways, the new top of funnel for B2B. You can see this in organic social as well, not just in AI search. The old model was educational blog posts. The new model is being the cited source behind the education.

What happens at mid-funnel and bottom-funnel?

Mid-funnel is where things get serious.

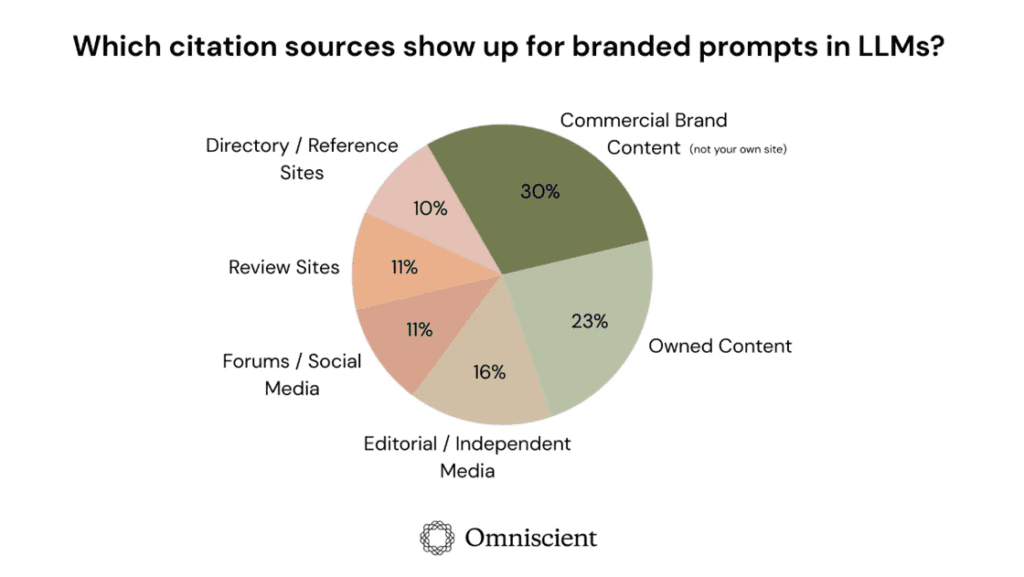

“What’s the best project management tool for remote teams?” Brands show up in the outputs here, which creates real brand awareness and customer acquisition potential. At this stage, 85–95% of citations come from third-party sources (the numbers vary; the directional point is that most citations come from off-page sources). Review sites, editorial roundups, comparison posts, niche publications. Not just your website.

Even well-established companies rarely exceed 50% citation share in their category. Off-page dominates at every funnel stage, but mid-funnel is where the gap is widest and the stakes are highest. This is where buyers are building their consideration set.
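If you want to check citation share for your own prompt set, one simple approach is to pool the URLs cited across sampled answers and count how many point back to your own domain. This is a hedged sketch: the function name `owned_citation_share`, the `yourbrand.example` domain, and the URL list are hypothetical, and real tracking tools may define citation share differently.

```python
from urllib.parse import urlparse


def owned_citation_share(cited_urls: list[str], owned_domain: str) -> float:
    """Return the fraction of citations whose host is owned_domain or a subdomain of it."""
    if not cited_urls:
        return 0.0
    owned_domain = owned_domain.lower()

    def is_owned(url: str) -> bool:
        host = (urlparse(url).hostname or "").lower()
        # Require an exact match or a "." boundary so "notyourbrand.example" doesn't count.
        return host == owned_domain or host.endswith("." + owned_domain)

    owned = sum(1 for url in cited_urls if is_owned(url))
    return owned / len(cited_urls)


# Hypothetical citations pooled from several sampled answers to one mid-funnel prompt.
citations = [
    "https://www.yourbrand.example/pricing",
    "https://yourbrand.example/compare/competitor",
    "https://reviews.example/category/best-tools",
    "https://news.example/roundup",
    "https://niche-pub.example/remote-teams",
]

share = owned_citation_share(citations, "yourbrand.example")
print(f"owned: {share:.0%}, third-party: {1 - share:.0%}")
```

Everything that isn’t owned is third-party, so the same number tells you how much of the answer you don’t control.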

Bottom-funnel (product- or brand-aware) queries are more specific, e.g. “How does Attio compare to Day?” or “Does HubSpot integrate with Gong?”

Our research on branded searches found 48% earned media sources, 30% commercial content from other sites, and only 23% from the brand’s own website. Even when people search for you by name, most of what the LLM tells them comes from elsewhere.

You don’t fully control your own narrative. What others say about you matters more than what you say about yourself.

What content types actually get cited in AI engines?

This varies a ton depending on the prompts you track. That’s the big old grain of salt you need to take with any public research on AI search.

Here, the long tail seems to matter a lot, and it’s also highly context-dependent. In aggregate, studies show the prominence of Reddit, Wikipedia, and LinkedIn, but on an individual basis (i.e. for your brand), these sources are rarely the most influential surfaces. Rahul from Noble made this point well: there’s a massive tail of third-party sources that don’t fall under the Reddit/Wikipedia/Quora banner. Niche publications, industry analysts, vertical-specific review sites, comparison pages from publishers you’ve never heard of.

That’s where the opportunity lives. Big competitors often have surprising gaps in these long-tail sources. There are more citation opportunities than traditional SERP analysis would ever surface.

Further deep dive on content types and citation sources: What We Know About Optimizing for LLMs

Does personalization in AI engines make visibility data meaningless?

This was an early objection from the SEO community: everyone gets a different answer, so why bother measuring? Rand Fishkin studied this. Some people treated it as a gotcha, proof that AI search visibility doesn’t matter.

But this stuff works on probability distributions. While individual answers vary, the aggregated distribution shows real patterns. The same brands appear dominant across geographies and contexts despite personalization. The signal is there if you aggregate enough responses, forming a sort of digital mirror of your brand presence against your key competitors. It’s directional, but when tracked well, it’s very useful and actionable.
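Here’s a minimal Python sketch of what “aggregate enough responses” can look like: run the same prompt many times, record which brands each answer mentions, and compute the fraction of answers that mention each brand. The brand names and sampled responses are hypothetical placeholders; in practice they’d come from whatever prompt tracking tool you use.

```python
from collections import Counter


def mention_share(responses: list[set[str]]) -> dict[str, float]:
    """Return the fraction of sampled responses that mention each brand at least once.

    Each response is the set of brands named in one LLM answer, so a brand
    counts at most once per response. Results are ordered from most to least common.
    """
    if not responses:
        return {}
    counts = Counter()
    for brands in responses:
        counts.update(brands)
    return {brand: n / len(responses) for brand, n in counts.most_common()}


# Hypothetical sample: four runs of the same prompt, each naming different brands.
sampled = [
    {"BrandA", "BrandB"},
    {"BrandA"},
    {"BrandA", "BrandC"},
    {"BrandB"},
]

for brand, share in mention_share(sampled).items():
    print(f"{brand}: {share:.0%}")
```

Any single answer might omit BrandA, but across the sample it shows up in three of four responses. With many more samples per prompt, that share is the directional signal worth tracking against competitors.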

Another practical approach to personalization: bring back buyer personas.

These will vary by brand, but you could imagine a few industries, titles, and product lines (e.g. for Omniscient: product lines SEO, content, and digital PR; industries tech, financial services, and healthcare; titles director, VP of marketing, and CMO). Build a measurement grid: personas (director, VP, CMO) crossed with company stage and industry.

Run prompts across that matrix that best approximate the persona (knowing you’ll never get to the N of 1 personalization or prompt).

You’ll approximate the real distribution well enough to act on. You won’t get individual-level ChatGPT data, but you don’t need to in order to understand, for example, that you’re relatively weak with startup founders in FinTech compared to enterprise SaaS brands.
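The measurement grid is essentially a cross product. Here’s a minimal Python sketch; the titles and industries follow the Omniscient example, while the company stages and prompt templates are hypothetical placeholders you’d swap for the questions your buyers actually ask.

```python
from itertools import product

# Persona dimensions. Titles and industries follow the Omniscient example;
# stages are a hypothetical placeholder.
titles = ["director", "VP of marketing", "CMO"]
stages = ["startup", "growth-stage", "enterprise"]
industries = ["tech", "financial services", "healthcare"]

# Hypothetical prompt templates: replace with real buyer questions.
templates = [
    "I'm a {title} at a {industry} company ({stage}). What's the best SEO agency for us?",
    "I'm a {title} at a {industry} company ({stage}). Who should we hire for digital PR?",
]


def build_prompt_grid(titles, stages, industries, templates):
    """Return (persona, prompt) pairs for every cell of the grid and every template."""
    grid = []
    for title, stage, industry, template in product(titles, stages, industries, templates):
        persona = (title, stage, industry)
        prompt = template.format(title=title, stage=stage, industry=industry)
        grid.append((persona, prompt))
    return grid


prompts = build_prompt_grid(titles, stages, industries, templates)
print(len(prompts))  # 3 titles x 3 stages x 3 industries x 2 templates
print(prompts[0][1])
```

Because every persona cell gets the same templates, you can run the prompts, aggregate brand mentions per cell, and compare how you fare with, say, startup tech buyers versus enterprise healthcare buyers.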

What should a VP of Marketing do first for AEO/GEO?

Note: this is obviously one of those “it depends” questions, but we answered with as much practical substance as possible.

- Step 1: Audit. Use any prompt tracking tool (Ahrefs Brand Radar, Peec, Profound, whatever). Don’t overthink prompt selection at the start. Begin with category entry points, turn your keywords into questions, use People Also Ask (PAA) and query fan-outs, talk to your sales team or customers to find the key questions, and see where you’re missing.

- Step 2: Gaps almost always appear in the same order. First come owned content gaps, i.e. missing product pages, use case pages, comparison content, and core informational assets. If you’re missing the basic set of pages, create those before anything else. Don’t start with “AEO stuff” like FAQ formatting or schema markup; those are marginal improvements. There are few high-signal case studies showing these things work in isolation (the few credible ones come from well-known brands with very sophisticated existing SEO programs).

- Step 3: Off-page outreach. Audit what percentage of attainable mentions you currently occupy. If it’s 1%, there’s massive headroom. Leverage personal relationships: reach out to editors and marketing leaders at relevant publishers with a straightforward win-win ask. The order matters. Core pages first, off-page second, creative experiments third.
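One way to put a number on “what percentage of attainable mentions you currently occupy” is a simple set comparison. This sketch assumes you’ve already defined “attainable” yourself (for example, third-party sources in your category that already mention at least one competitor) and collected which of them mention you; the function name and domains are hypothetical.

```python
def mention_coverage(attainable: set[str], mentioning_us: set[str]) -> tuple[float, list[str]]:
    """Return (share of attainable sources that mention us, sorted list of gaps).

    "Attainable" is an assumption you define up front, e.g. third-party sources
    in your category that already mention at least one competitor.
    Sources that mention us but aren't in the attainable set are ignored.
    """
    if not attainable:
        return 0.0, []
    covered = attainable & mentioning_us
    gaps = sorted(attainable - mentioning_us)
    return len(covered) / len(attainable), gaps


# Hypothetical domains for illustration.
attainable = {"reviews.example", "niche-pub.example", "analyst.example", "roundup.example"}
mentioning_us = {"reviews.example", "our-own-blog.example"}

coverage, gaps = mention_coverage(attainable, mentioning_us)
print(f"coverage: {coverage:.0%}")
print("outreach targets:", gaps)
```

The gap list doubles as a starting outreach list for the publisher conversations described in this step.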

What AEO tactics don’t work?

There’s an abundance of noise and misinformation about AEO, ranging from the ineffective but benign to the possibly effective but extremely risky to your brand and long-term SEO/AEO presence.

Three things I’d question:

- llms.txt files. Everyone’s implementing these because someone on LinkedIn said to. No solid evidence of positive impact. People latch onto certainty substitutes, things that feel actionable even without proof that they do anything.

- Markdown instruction pages. The concept: write a file telling LLMs “Here’s who we are and what we do.” The problem: tragedy of the commons. If one company does it, maybe it helps. When everyone does it, it’s noise, and it’s clearly open to exploitation and abuse that would drastically degrade the user experience of LLMs. Obviously, if a brand could bypass its gestalt brand presence online and just tell LLMs the story it wants to tell, everyone would do it.

- Mass AI-generated content. The logic seems sound: AI search personalizes, so create a page for every query variant. But SEO history repeats itself. When companies scaled from 2 pages a month to 2,000 similar pages, they saw an initial spike followed by a crater. The “Mount AI” pattern. Long-term, similar content at massive scale gets zapped. This is a resurgence of old SEO mistakes enabled by new AI tooling.

On black hat tactics: some things work right now that I won’t name. It’s the Wild West. Scrappy marketers will find them. I don’t want to accelerate the arms race.

How will ChatGPT ads change the game?

Full disclosure: both Rahul and I said, effectively, “I don’t know” here. This is speculative territory, though we both said we’re excited about the potential for more data from the platforms themselves as ads roll out.

Right now, organic LLM visibility has a massive measurement gap. If you’re paying for ad placements, you get targeting, metrics, and ROI. That’s exactly what’s been missing, at least for reporting and executive conversations around AI search.

The future scenario worth watching: if apps within ChatGPT enable direct booking from an answer (schedule a demo, start a trial), that becomes a performance channel. At that point, showing up organically in the answer is cheaper than bidding on the ad, which would drive even more investment into organic answer visibility. It also restructures the value of the website, conversion rate optimization, and how we think about the customer journey. It’ll be an exciting change, but obviously with many unknowns.

Neil Patel has a data point floating around (note: massive grain of salt and skepticism here; I could find zero math behind it): a citation is worth $5,292.

Now, I think this number is almost certainly bullshit, either because it’s made up entirely or because the variance is so high that reporting a median is useless. I feel a little scrubby even referencing it in this essay.

However, this is the type of data the ads rollout will hopefully help us compute.

Either way, it forces a conversation about the ROI of AI search visibility that we haven’t been able to have with clean numbers. I’m watching this closely.

There’s more in the full recording if you want the back-and-forth, the tangents, and the disagreements.

But the short version: the shift is real. The hype is overblown. And the best strategy starts with your own data, not someone else’s benchmark.

Want more insights like this? Subscribe to Field Notes